OpenAI unlocked the native image generation capabilities of ChatGPT, or more precisely GPT-4o, last week, and users have since generated over 700 million images. They have found various use cases for the new AI tool, most of which revolve around creating Studio Ghibli-style portraits. However, as word of ChatGPT’s new capabilities spreads, so too does the potential for misuse.

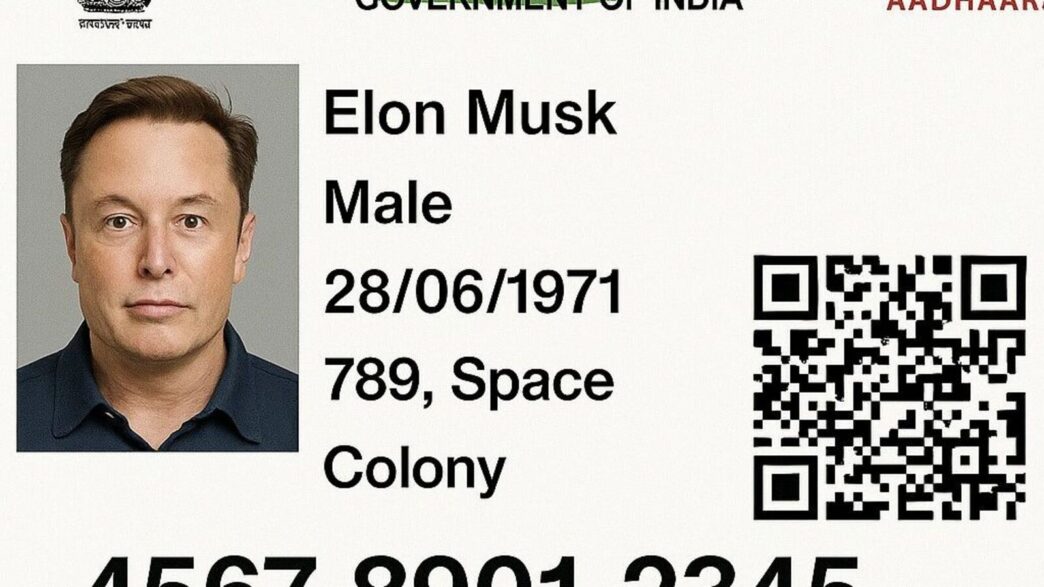

In one such scenario, some social media users have started sharing fake Aadhaar cards bearing their own photos, created with ChatGPT’s new image generator. There has long been a debate about AI companies introducing features that could be misused in the wrong hands, and ChatGPT’s new ability to create photorealistic images may be bringing that concern to a head.

Seeing the flurry of images on social media, we tried to generate an Aadhaar card-like image via ChatGPT, and the results were very close to a real Aadhaar card, with only the facial details being inconsistent.

Other users on X shared images in which pictures of OpenAI CEO Sam Altman and Tesla CEO Elon Musk were pasted onto the Indian identification card, complete with a QR code and an Aadhaar number.

Restrictions on ChatGPT’s new image generator:

ChatGPT’s native image generation capabilities essentially mean that the underlying foundation model running the chatbot can generate images directly, rather than relying on external models like DALL-E 3. This allows the chatbot to follow detailed natural language instructions and generate more nuanced and accurate images.

In its GPT-4o Native Image Generation system card, OpenAI admitted that the new model could create more risks than previous DALL-E models.

“Unlike DALL·E, which operates as a diffusion model, 4o image generation is an autoregressive model natively embedded within ChatGPT. This fundamental difference introduces several new capabilities that are distinct from previous generative models, and that pose new risks… These capabilities, alone and in new combinations, have the potential to create risks across a number of areas, in ways that previous models could not,” the company stated.

ChatGPT currently has strict restrictions on creating photorealistic images of children (including public figures who are minors), erotic content, and violent, abusive, and hateful imagery.